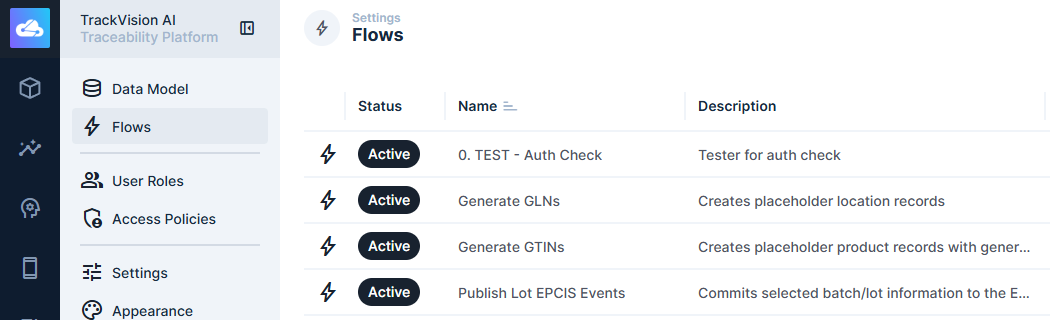

Flows

Flows are TrackVision's automation engine. Each flow has a trigger that determines when it runs, and a chain of operations that execute in sequence. Data passes through the chain as a shared context: each operation can read the output of any previous step and append its own result.

Flows are configured through a visual canvas in Settings > Flows.

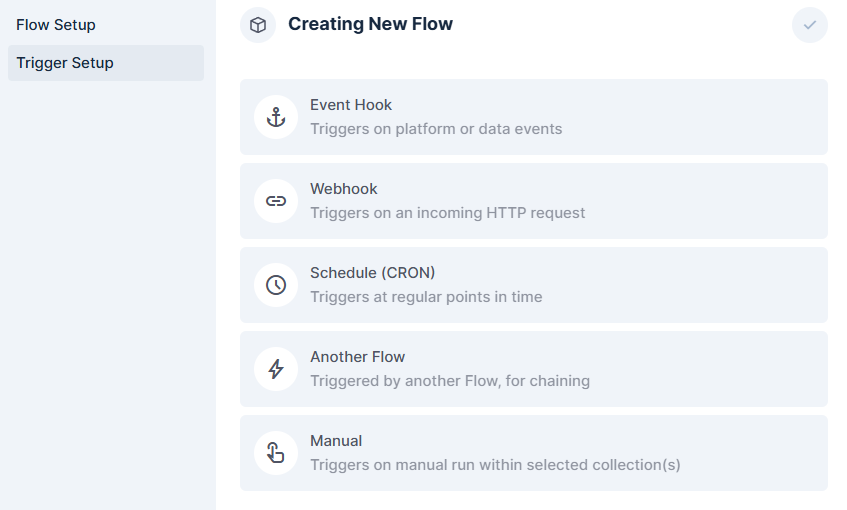

Triggers

The trigger defines what starts a flow. Five trigger types are available:

Event Hook

Fires when data changes in a collection — on item create, update, delete, or other platform events. Two modes:

- Blocking (Filter) — the flow runs synchronously before the database commits. You can inspect or modify the incoming payload, or throw an error to cancel the operation entirely. Useful for custom validation logic that should prevent bad data from being saved.

- Non-blocking (Action) — the flow runs asynchronously after the event completes. Useful for side-effects like sending notifications or syncing to external systems.

Event hooks let a flow react to data changes as they happen, so event-driven logic can be built without polling for changes.

Schedule

Runs on a cron schedule. Uses six-field cron syntax (second, minute, hour, day of month, month, day of week). Suitable for periodic tasks such as data cleanup, report generation, and timed synchronisation with external systems.
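To make the field order concrete, the sketch below labels each field of a six-field expression. The helper `describeCron` and the sample expressions are hypothetical and for illustration only; which cron extensions (ranges, steps, names) the scheduler accepts is not covered here.

```typescript
// Field order for six-field cron, as described above.
const CRON_FIELDS = ["second", "minute", "hour", "dayOfMonth", "month", "dayOfWeek"] as const;

type CronField = (typeof CRON_FIELDS)[number];

// Hypothetical helper: splits an expression and labels each field.
// It checks only the number of fields, not whether each value is valid.
function describeCron(expression: string): Record<CronField, string> {
  const parts = expression.trim().split(/\s+/);
  if (parts.length !== CRON_FIELDS.length) {
    throw new Error(`Expected 6 fields, got ${parts.length}`);
  }
  const labelled = {} as Record<CronField, string>;
  CRON_FIELDS.forEach((name, i) => {
    labelled[name] = parts[i] as string;
  });
  return labelled;
}

// Sample expressions (illustrative only):
const nightly = describeCron("0 0 2 * * *");            // 02:00:00 every day
const everyFifteenMin = describeCron("0 */15 * * * *"); // second 0 of every 15th minute
```

A common mistake is pasting a five-field expression from a system crontab; with six fields, the first value is seconds, so every other field shifts one position to the right.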

Webhook

Exposes an HTTP endpoint at /flows/trigger/:id that starts the flow when called. Can operate synchronously (waits for the flow to complete and returns the result) or asynchronously (responds immediately). GET requests support optional response caching. Useful for receiving data from external systems or triggering flows from your own applications.
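As a rough sketch, calling a webhook-triggered flow from your own application might look like the following. The base URL, flow ID, and payload are placeholders, the `HttpClient` type is a hypothetical stand-in that the global `fetch` satisfies, and the example assumes synchronous mode with a JSON response. Any authentication your instance requires is not shown.

```typescript
// Minimal response shape this sketch relies on (a subset of the Fetch API Response).
interface HttpResponse {
  ok: boolean;
  status: number;
  json(): Promise<unknown>;
}

// Minimal request function shape; the global fetch is compatible with it.
type HttpClient = (
  url: string,
  init: { method: string; headers: Record<string, string>; body: string },
) => Promise<HttpResponse>;

// Builds the trigger URL from the documented path /flows/trigger/:id.
function buildTriggerUrl(baseUrl: string, flowId: string): string {
  return `${baseUrl.replace(/\/+$/, "")}/flows/trigger/${encodeURIComponent(flowId)}`;
}

// Posts a JSON payload to the flow's webhook endpoint and returns the parsed response.
async function triggerFlow(
  http: HttpClient,
  baseUrl: string,
  flowId: string,
  payload: unknown,
): Promise<unknown> {
  const response = await http(buildTriggerUrl(baseUrl, flowId), {
    method: "POST",
    headers: { "Content-Type": "application/json" },
    body: JSON.stringify(payload),
  });
  if (!response.ok) {
    throw new Error(`Flow trigger failed with HTTP ${response.status}`);
  }
  return response.json();
}
```

In a runtime with a global `fetch`, the call would be `triggerFlow(fetch, baseUrl, flowId, payload)`. Passing the client in as a parameter also makes the function easy to exercise with a fake in tests.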

Manual

Adds a button to collection item views in the UI. When a user selects one or more items and clicks the button, the flow runs with the selected item IDs available in the trigger context. Supports custom confirmation dialogs with input fields, and displays a toast notification when complete.

Another Flow

Allows one flow to call another, passing data and optionally receiving a response. Supports serial, parallel, or batch iteration modes when processing arrays.

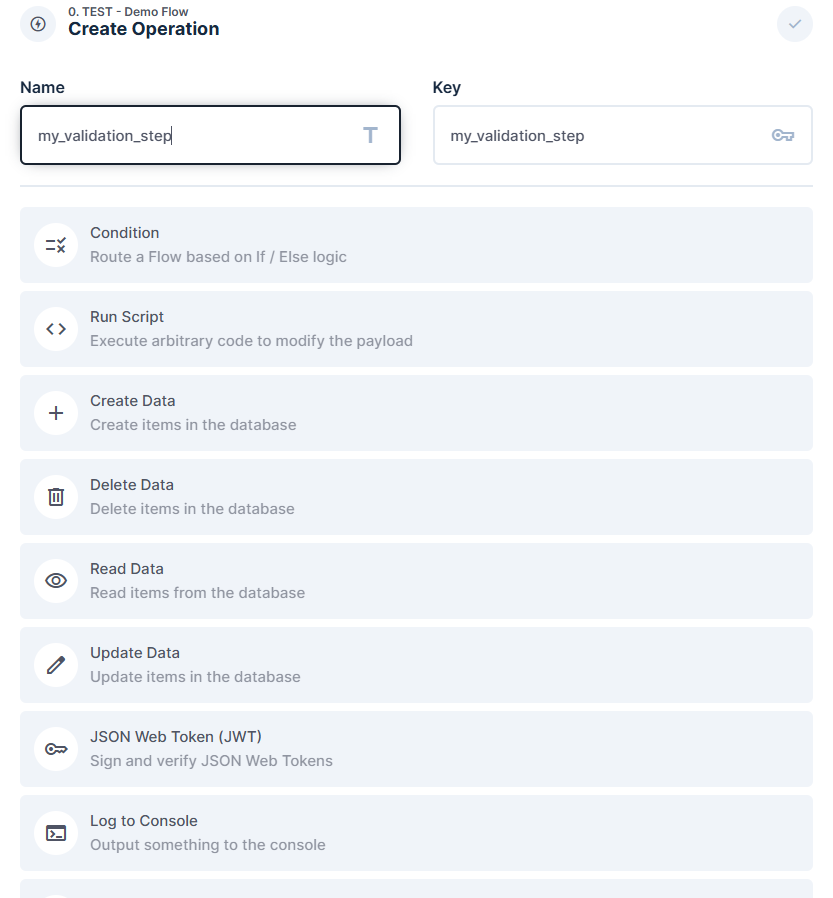

Operations

Operations are the steps in a flow. Each appends its result to the shared data chain under its own key, making it available to downstream steps.
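The following is a simplified model of that behaviour, not the platform's implementation. The `Operation` shape, `runFlow`, and the sample keys are hypothetical; `$trigger` and `$last` are the data chain variables described in the Data Chain section, and the initial `null` value of `$last` is an assumption.

```typescript
// The shared data chain: a plain object keyed by variable or operation key.
type DataChain = Record<string, unknown>;

// Hypothetical operation shape: a key plus a function that reads the chain.
interface Operation {
  key: string;
  run(chain: DataChain): unknown;
}

// Runs operations in order. Each result is stored under the operation's key
// and mirrored to $last so the next step can read it.
function runFlow(trigger: unknown, operations: Operation[]): DataChain {
  const chain: DataChain = { $trigger: trigger, $last: null };
  for (const op of operations) {
    const result = op.run(chain);
    chain[op.key] = result;
    chain["$last"] = result;
  }
  return chain;
}

// Two illustrative steps: the second reads the output of the first by key.
const chain = runFlow({ sku: "A-100" }, [
  {
    key: "lookup",
    run: (c) => ({ sku: (c["$trigger"] as { sku: string }).sku, stock: 3 }),
  },
  {
    key: "check",
    run: (c) => (c["lookup"] as { stock: number }).stock > 0,
  },
]);
```

After the run, `chain` holds `$trigger`, `lookup`, `check`, and `$last`, which is why operation keys should be unique within a flow.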

Data Operations

Create Data — creates one or more items in a specified collection. Returns the IDs of the created items.

Read Data — retrieves items from a collection by ID or filter query. Returns the full item array.

Update Data — modifies existing items by ID or query. Supports single objects or batch arrays. Returns updated item IDs.

Delete Data — removes items from a collection. Returns the deleted item IDs.

Logic and Control

Condition — evaluates a filter rule and branches the flow into a success path or a reject path. If a tested field is missing from the payload, the condition takes the reject path regardless of the operator.
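The missing-field rule is easy to overlook with negative operators. The sketch below models it with a deliberately tiny, hypothetical rule format (`eq` and `neq` only); it is not the platform's filter syntax, only an illustration of the branching behaviour described above.

```typescript
type Operator = "eq" | "neq";

// Hypothetical, simplified rule: one field, one operator, one value.
interface Rule {
  field: string; // dot-separated path into the payload
  operator: Operator;
  value: unknown;
}

// Walks a dot-separated path; reports found: false if any segment is missing.
function lookup(payload: unknown, path: string): { found: boolean; value?: unknown } {
  let current: unknown = payload;
  for (const segment of path.split(".")) {
    if (typeof current !== "object" || current === null || !(segment in current)) {
      return { found: false };
    }
    current = (current as Record<string, unknown>)[segment];
  }
  return { found: true, value: current };
}

// Returns which path the flow takes for the given payload.
function evaluate(rule: Rule, payload: unknown): "success" | "reject" {
  const result = lookup(payload, rule.field);
  if (!result.found) return "reject"; // missing field: reject regardless of operator
  const equal = result.value === rule.value;
  const passed = rule.operator === "eq" ? equal : !equal;
  return passed ? "success" : "reject";
}
```

With the rule `status neq "draft"`, a payload of `{ status: "published" }` takes the success path, but an empty payload takes the reject path even though its status is, loosely speaking, "not draft". On update events, where the payload may contain only the changed fields, this can matter.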

Trigger Flow — calls another flow and passes data to it. Execution modes: serial (one at a time), parallel (all at once), or batch (chunked). Can receive the called flow's response.
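The three execution modes can be pictured with the sketch below. All names are hypothetical, `handler` stands in for "call the other flow with this item", and the reading of batch mode (chunks processed one after another, items within a chunk in parallel) is an assumption rather than documented behaviour.

```typescript
type Handler<T, R> = (item: T) => Promise<R>;

// Serial: each call starts only after the previous one has finished.
async function runSerial<T, R>(items: T[], handler: Handler<T, R>): Promise<R[]> {
  const results: R[] = [];
  for (const item of items) {
    results.push(await handler(item));
  }
  return results;
}

// Parallel: all calls start at once; results keep the input order.
async function runParallel<T, R>(items: T[], handler: Handler<T, R>): Promise<R[]> {
  return Promise.all(items.map((item) => handler(item)));
}

// Splits an array into chunks of at most `size` items.
function chunk<T>(items: T[], size: number): T[][] {
  if (!Number.isInteger(size) || size < 1) {
    throw new Error("size must be a positive integer");
  }
  const chunks: T[][] = [];
  for (let i = 0; i < items.length; i += size) {
    chunks.push(items.slice(i, i + size));
  }
  return chunks;
}

// Batch (assumed semantics): chunks run one after another,
// and the items inside each chunk run in parallel.
async function runBatch<T, R>(items: T[], size: number, handler: Handler<T, R>): Promise<R[]> {
  const results: R[] = [];
  for (const group of chunk(items, size)) {
    results.push(...(await runParallel(group, handler)));
  }
  return results;
}
```

Serial is the gentlest on downstream systems, parallel is the fastest for small arrays, and batch sits in between when an external API enforces rate limits.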

Sleep — introduces a delay in milliseconds before the next operation runs.

Throw Error — halts the flow with a custom error code and HTTP status. When used in a blocking event hook, this cancels the database transaction — effectively preventing the triggering operation from completing.

Scripting and Transformation

Run Script — executes arbitrary JavaScript or TypeScript in an isolated sandbox. The return value is appended to the data chain. The sandbox cannot access the file system or make network requests directly (use a Webhook/Request operation for HTTP calls).
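A script of this kind is typically a pure function over the data chain. The sketch below assumes an entry point that receives the chain and returns the value to append; that signature, the `Product` fields, and the extra `now` parameter (added only so the example can be tested) are all assumptions, not the platform's documented contract.

```typescript
// Assumed shape of items produced by an earlier Read Data step (hypothetical fields).
interface Product {
  id: string;
  expiry_date: string; // ISO 8601 date
}

// Assumed entry point: receives the data chain and returns the value to append.
// No file system or network access is needed; the script only transforms data.
function run(data: { $last: Product[] }, now: Date = new Date()): { expired_ids: string[] } {
  const expired_ids = data.$last
    .filter((product) => new Date(product.expiry_date).getTime() < now.getTime())
    .map((product) => product.id);
  return { expired_ids };
}
```

A downstream Update Data or Delete Data operation could then reference the returned IDs through the script's operation key.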

Transform Payload — reshapes data by assembling a custom JSON payload from any combination of values in the current data chain. Useful for preparing data before sending it to an external API or another operation.

External Integrations

Webhook / Request URL — makes an outbound HTTP request (GET, POST, PATCH, DELETE) to any URL. Request headers, body, and query parameters can reference values from the data chain. The response is appended to the chain for use in downstream steps.

Send Email — sends an email to one or more recipients. Body can be written in a WYSIWYG editor, Markdown, or a saved template.

Send Notification — sends an in-platform notification to one or more TrackVision users by ID.

Utilities

Log to Console — writes a message to the server console and the flow's execution log. Useful during development and for audit trail entries.

JWT — signs, verifies, or decodes a JSON Web Token. Useful when integrating with external systems that require token-based authentication.

Data Chain

All operations share a single data object that grows as the flow executes. Key variables:

- $trigger: the trigger's input payload (e.g. the item payload on an event hook, the HTTP body on a webhook)

- $accountability: the user and role that triggered the flow, carried through all operations

- $last: the output of the most recently executed operation

- Individual operation outputs are also accessible by their operation key (e.g. {{read_products.data}})

Dynamic values in operation fields are written using {{ }} syntax referencing these variables.
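To illustrate how such placeholders map onto the data chain, here is a minimal resolver sketch. It is a model for intuition, not the platform's template engine: `getPath`, `render`, and the sample chain are hypothetical, and the handling of missing or non-string values is an assumption.

```typescript
type DataChain = Record<string, unknown>;

// Follows a dot-separated path through nested objects; undefined if any step is missing.
function getPath(chain: DataChain, path: string): unknown {
  let current: unknown = chain;
  for (const segment of path.split(".")) {
    if (typeof current !== "object" || current === null) return undefined;
    current = (current as Record<string, unknown>)[segment];
  }
  return current;
}

// Replaces each {{ path }} placeholder with the matching value from the chain.
// Strings are inserted as-is, other values are JSON-encoded,
// and placeholders with no matching value are left untouched.
function render(template: string, chain: DataChain): string {
  return template.replace(/\{\{\s*([^{}]+?)\s*\}\}/g, (match: string, path: string) => {
    const value = getPath(chain, path);
    if (value === undefined) return match;
    return typeof value === "string" ? value : JSON.stringify(value);
  });
}

// Sample chain (illustrative values only).
const example: DataChain = {
  $trigger: { payload: { sku: "A-100" } },
  read_products: { data: [{ id: 1 }] },
  $last: 42,
};

const message = render("SKU {{ $trigger.payload.sku }}, last result {{$last}}", example);
```

Here `message` resolves `$trigger.payload.sku` from the trigger payload and `$last` from the most recent operation, while `{{read_products.data}}` would resolve to the output stored under the `read_products` operation key.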

Execution Logs

Every flow run generates a log entry, accessible from the flow's detail page in the admin UI. Logs capture the trigger payload, each operation's input and output, and any errors. Log entries are persisted to the database — for high-volume flows, periodic log cleanup is recommended.

Example Use Cases

Validate data before save — use a blocking event hook with a Condition operation to check incoming payloads and Throw Error to reject records that don't meet your criteria.

Notify a team on a supply chain event — use a non-blocking event hook on an EPCIS event collection to send an email or in-platform notification when a specific event type is recorded.

Sync records to an external system — use a non-blocking event hook or schedule trigger to call an external REST API via Webhook/Request URL whenever records change or on a timed basis.

Bulk update triggered from the UI — use a Manual trigger so an operator can select a set of records and apply a status update across all of them in one action.

Scheduled data cleanup — use a Schedule trigger to find and delete or archive stale records on a nightly or weekly cron.

Chain complex logic across multiple flows — use Trigger Flow to break large automations into smaller, testable units that can be developed and maintained independently.